Demand for AI ASICs and SSDs is booming! Marvell Technology (MRVL.US) reaps the “AI inference dividend” as operating profit soars 72%

Zhitong Finance App has learned that Marvell Technology (MRVL.US), which focuses on custom AI chips (AI ASICs) for hyperscale AI data centers and is one of the largest partners on Amazon AWS's Trainium series of AI ASICs, reported results and an outlook that beat Wall Street expectations after the US market closed on March 6, Beijing time. Marvell's strong results and outlook, together with the explosive growth figures reported a day earlier by the larger AI ASIC leader Broadcom (AVGO.US), pose a serious challenge to Nvidia's near-monopoly in AI chips, where it holds close to 90% of the market. The backdrop is persistently strong demand for memory chips, surging demand for cloud AI inference compute, and a “micro-training” trend focused on embedding AI models into business operations.

According to the results, Marvell Technology's revenue for the fourth quarter of fiscal 2026, which ended January 31, was about US$2.22 billion, a record high and up more than 20% year on year, slightly above the Wall Street analyst consensus of about US$2.21 billion. Adjusted (non-GAAP) earnings per share for the quarter were US$0.80, above the consensus of about US$0.79 and up from US$0.60 a year earlier. GAAP operating profit reached US$404.4 million, up 72% year on year and above the Wall Street consensus; net profit attributable to common shareholders was approximately US$396.1 million, up about 97.9% year on year.

Within that, the data center business, which is closely tied to AI training and inference systems, contributed about US$1.65 billion, or about 74% of total revenue, up about 21% year on year and 9% quarter on quarter from an already strong base. The company emphasized in its earnings statement that bookings for its data center business are growing at a “record rate.” After the report was released, Marvell Technology's shares rose more than 15% at one point in after-hours trading.
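The headline ratios in the two paragraphs above can be cross-checked with a few lines of arithmetic. The sketch below uses only the rounded figures quoted in the article; the variable and function names are illustrative, and small gaps against the reported percentages come from rounding in the inputs.

```python
# Rounded figures as quoted in the article.
total_revenue_busd = 2.22          # Q4 FY2026 revenue, US$ billions
data_center_revenue_busd = 1.65    # data center revenue, US$ billions
eps_now, eps_year_ago = 0.80, 0.60 # non-GAAP EPS, US$
operating_profit_musd = 404.4      # GAAP operating profit, US$ millions
operating_profit_growth = 0.72     # +72% year on year


def pct_change(new: float, old: float) -> float:
    """Percentage change from old to new."""
    return (new / old - 1.0) * 100.0


# Share of total revenue contributed by the data center business.
data_center_share = data_center_revenue_busd / total_revenue_busd * 100.0
# Year-on-year growth in non-GAAP EPS.
eps_growth = pct_change(eps_now, eps_year_ago)
# Prior-year operating profit implied by the quoted growth rate.
implied_prior_operating_profit = operating_profit_musd / (1.0 + operating_profit_growth)

print(f"Data center share of revenue: {data_center_share:.1f}%")
print(f"Non-GAAP EPS growth: {eps_growth:.1f}%")
print(f"Implied prior-year operating profit: US${implied_prior_operating_profit:.1f}M")
```

With these inputs the data center share comes out at roughly 74.3%, consistent with the “about 74%” quoted, and the EPS figures imply growth of roughly one third year on year.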

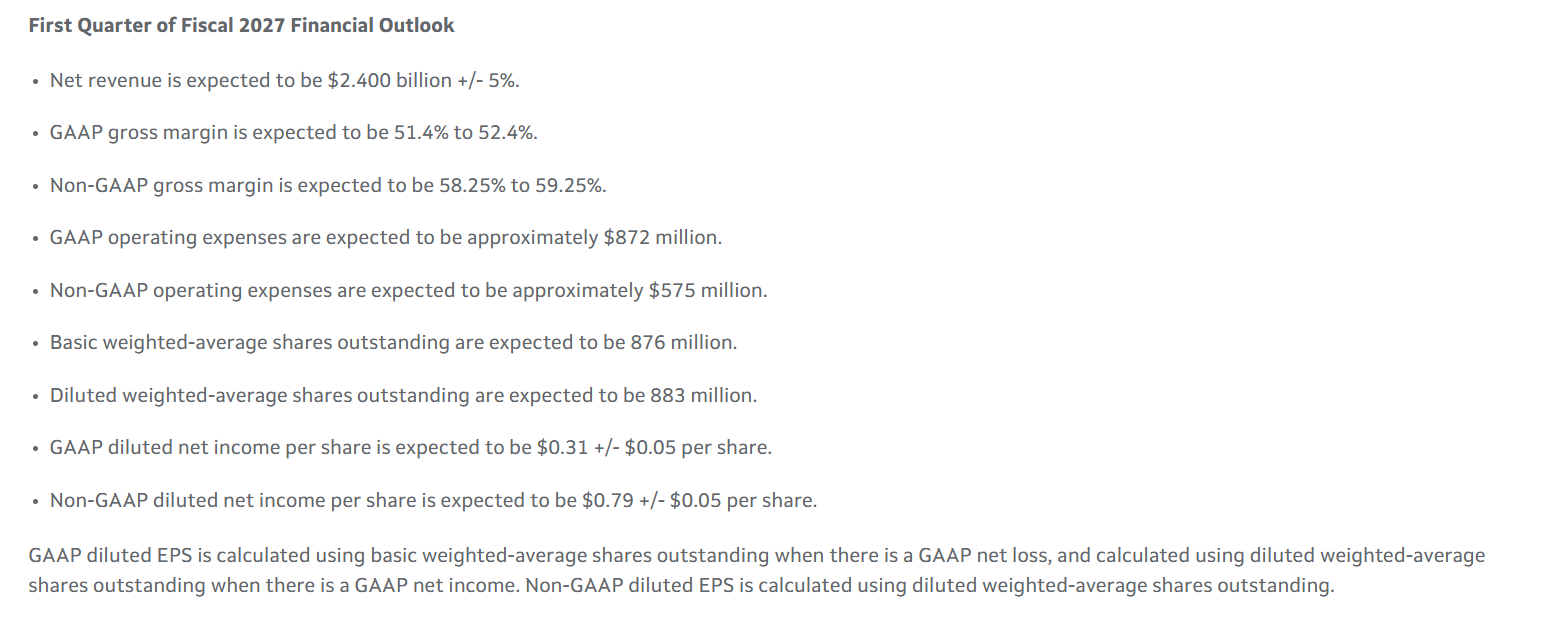

On the outlook, which the market watches most closely, Marvell's CEO expects year-on-year revenue growth to “further accelerate” this fiscal year. The midpoint of management's revenue guidance for the first quarter of fiscal 2027 is about US$2.4 billion, well above the analyst consensus of about US$2.27 billion. Notably, that consensus had been raised repeatedly since the end of January, when US technology giants such as Google, Amazon, and Nvidia reported strong results. Even so, Marvell's official outlook still exceeds the revised expectations, which shows how sharply demand for AI compute infrastructure built on the AI ASIC route has grown. Marvell and Broadcom both look set to stay on the “Nvidia-style” explosive growth trajectory they have been on since 2024.

The company guided non-GAAP adjusted earnings per share to a range of US$0.74 to US$0.84, with the midpoint significantly above the Wall Street consensus, and guided non-GAAP gross margin to a range of 58.25% to 59.25%, also above the consensus.
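To make the size of the guidance beat concrete, the following minimal sketch computes the range midpoints and the gap between the revenue guidance midpoint and the analyst consensus. It relies only on the figures quoted above; the helper name `midpoint` and the variable names are illustrative.

```python
# Guidance figures as quoted in the article.
revenue_guidance_mid_busd = 2.40   # Q1 FY2027 revenue guidance midpoint, US$ billions
revenue_consensus_busd = 2.27      # analysts' average expectation, US$ billions
eps_low, eps_high = 0.74, 0.84     # non-GAAP EPS guidance range, US$
gm_low, gm_high = 58.25, 59.25     # non-GAAP gross margin guidance range, %


def midpoint(low: float, high: float) -> float:
    """Midpoint of a guidance range."""
    return (low + high) / 2.0


# How far the revenue guidance midpoint sits above consensus, in percent.
revenue_beat_pct = (revenue_guidance_mid_busd / revenue_consensus_busd - 1.0) * 100.0
eps_mid = midpoint(eps_low, eps_high)
gm_mid = midpoint(gm_low, gm_high)

print(f"Revenue guidance vs. consensus: +{revenue_beat_pct:.1f}%")
print(f"EPS guidance midpoint: US${eps_mid:.2f}")
print(f"Gross margin guidance midpoint: {gm_mid:.2f}%")
```

On these numbers the revenue guidance midpoint is roughly 5.7% above consensus, and the EPS range is centered on US$0.79.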

The day before Marvell Technology reported, AI ASIC leader Broadcom released results showing total revenue of US$19.3 billion, up 29% year on year. Broadcom said AI-related revenue doubled in the period to US$8.4 billion, well ahead of the company's previous expectations. Q1 semiconductor solutions revenue, which includes AI ASICs, smartphone RF chips, and other semiconductor businesses, reached US$12.515 billion, up 52% year on year.

The most important point in Broadcom's report was its CEO's statement that “AI chip” revenue, which is tied to AI ASICs, will exceed US$100 billion next year. That US$100 billion target covers not only revenue from “AI ASIC compute clusters,” which compete fiercely with the AI GPUs led by Nvidia, but also revenue from AI networking chips, that is, high-performance Ethernet switch chips. The results from Broadcom and Marvell, the two ASIC leaders, therefore jointly reinforce the “AI ASIC bull market” narrative: as cloud giants such as Google, Amazon, and Microsoft launch an “AI compute cost revolution” and accelerate large-scale adoption of AI ASICs, the core competition in the inference era is no longer just “peak compute,” but cost per token, power consumption, memory bandwidth utilization, interconnect efficiency, and total cost of ownership after hardware-software co-design. On these metrics, ASICs whose dataflow and compilers are customized for specific workloads find it naturally easier than general-purpose GPUs to deliver strong cost performance.

Price targets compiled by TipRanks show that Wall Street analysts are extremely optimistic about the revenue prospects of Marvell Technology's AI chip and SSD controller chip businesses: the analyst consensus rating is “Strong Buy.” The average 12-month price target is as high as US$118, implying potential upside of as much as 56%.
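The article does not state the share price behind the 56% figure, but the two quoted numbers imply one. The sketch below backs it out, assuming “upside” is defined as target price divided by current price, minus one; that definition and the function name are assumptions, not something the article confirms.

```python
# Figures as quoted in the article.
average_target_usd = 118.0   # average 12-month price target, US$
implied_upside = 0.56        # potential upside of 56%


def implied_reference_price(target: float, upside: float) -> float:
    """Share price implied by a target and an upside, assuming
    upside = target / price - 1 (an assumed definition)."""
    return target / (1.0 + upside)


reference_price = implied_reference_price(average_target_usd, implied_upside)
print(f"Implied reference share price: US${reference_price:.2f}")
```

Under that assumption, the quoted target and upside correspond to a reference share price of roughly US$75.6.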

AI ASICs and SSD controller chips together drive Marvell's surge in results

Against the backdrop of the global wave of AI infrastructure construction, Marvell Technology's strong results mainly reflect exploding demand for data center infrastructure semiconductors, especially custom AI ASICs, high-performance communication and control chips, and data-center-grade enterprise SSD (eSSD) controller chips. Marvell Technology's strong revenue growth in recent years is largely due to the data center business, particularly custom AI ASICs, high-bandwidth networking chips, interconnect solutions, and SSD controllers for cloud service providers and supercomputing platforms, all of which are integral to AI training and inference platforms. Data center revenue continues to rise as a share of the total, and it is growing significantly faster than the company as a whole.

Marvell Technology has long focused on rapidly iterating its custom AI ASIC technology, network processors (DPUs/NPUs), SSD controllers, and high-bandwidth interconnect products. Demand for these products in large-scale AI model training, inference, and large-scale data storage and processing is growing rapidly as global demand for AI compute expands exponentially. Custom silicon design for hyperscale data center customers is no longer a fringe business but one of the core growth engines for global chip companies.

Amazon AWS officially positions Trainium and Inferentia, its AI ASIC compute platforms built in collaboration with Marvell, as dedicated accelerators for generative AI training and inference. Trainium2 delivers about 30% to 40% better price performance than AWS's AI GPU cloud instances, and Google recently stated publicly that 100% of Gemini 2.0's training and inference runs on TPUs. These facts indicate that the model of “hyperscale cloud companies deploying self-developed ASICs commercially to run core model training and inference” is no longer a proof of concept but is entering a replicable, industrialized stage.
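A price-performance gain does not translate one-for-one into a cost saving. As a rough illustration, and assuming “price performance” here means performance per dollar (an interpretation, not something the article spells out), the sketch below converts the quoted 30% to 40% gain for Trainium2 into the implied reduction in cost for the same amount of work. The function name is illustrative.

```python
def cost_reduction_pct(price_performance_gain_pct: float) -> float:
    """Cost saving for a fixed workload, given a gain in performance per dollar.

    If a platform delivers (1 + g) times the work per dollar, the same work
    costs 1 / (1 + g) as much, so the saving is 1 - 1 / (1 + g).
    """
    ratio = 1.0 + price_performance_gain_pct / 100.0
    return (1.0 - 1.0 / ratio) * 100.0


# Range quoted in the article for Trainium2 versus AI GPU cloud instances.
low_saving = cost_reduction_pct(30.0)
high_saving = cost_reduction_pct(40.0)

print(f"Implied cost reduction: {low_saving:.1f}% to {high_saving:.1f}%")
```

Under this reading, a 30% to 40% price-performance advantage corresponds to roughly 23% to 29% lower cost for an equivalent workload.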

The recent strong reports from Broadcom and Marvell show that the growth thesis for AI ASICs is quickly being confirmed by hard financial evidence. The global generative AI boom has accelerated AI chip development at cloud and chip giants, which are vying to design the fastest and most energy-efficient AI compute infrastructure for advanced hyperscale AI data centers. Broadcom and its biggest competitor, Marvell, mainly use their strengths in high-speed connectivity and chip IP to work with cloud giants such as Amazon, Google, and Microsoft on AI ASIC compute clusters tailored to the specific needs of those customers' AI data centers. This ASIC business has become very important for both companies. For example, the TPU compute clusters built by Broadcom and Google are among the most typical examples of the AI ASIC route.

Marvell's strong results, together with those previously reported by Samsung, SK Hynix, and Micron, the three major memory chip makers at the center of the “storage supercycle,” highlight that high-performance memory controllers and SSD controller chips remain a core driver of “hidden compute.” In large-model training and inference systems, I/O bandwidth, persistent storage access efficiency, and memory-pool interconnect efficiency also constrain overall cost and performance. Marvell Technology's SSD controller chips, NVMe/CXL cache controllers, and high-bandwidth storage interconnect product lines are important parts of this growing demand. Although these highly specialized control ASICs are less conspicuous than the rapidly expanding AI ASIC business, they are essential for processing the data and state of large-parameter AI models and directly drive system efficiency and service quality at the data center level.

From a combined semiconductor and AI data center infrastructure perspective, SSD storage chips are so “perfectly positioned” in the AI wave because they benefit from both training expansion and inference expansion, and they act as a “universal toll booth” across platforms, architectures, and ecosystems. As the AI era shifts from training toward inference, agents, long contexts, and retrieval augmentation, system demand for capacity, bandwidth, power efficiency, and the data persistence layer will only increase. HBM has never been the only storage technology that AI data centers depend on heavily. According to institutions such as JPMorgan Chase, NVMe eSSDs, which serve the enterprise storage layer, are seeing unprecedented structural growth as AI workloads shift from training to inference and as HDD supply bottlenecks emerge in nearline storage.

Driven by extremely strong demand from AI data centers, DRAM and NAND prices are expected to keep soaring. BNP Paribas recently released a research report forecasting that DRAM contract prices will rise 90% quarter on quarter in the first quarter of 2026, while NAND, long known for its stable price curve, is expected to rise 55%, and that the second quarter will continue the upward price trajectory that began in the second half of 2025.

BNP Paribas's view on rising storage prices is not an isolated one. TrendForce recently raised its forecast for conventional DRAM contract prices in the first quarter of 2026 to +90% to +95% quarter on quarter (QoQ) and sharply raised its NAND flash contract price forecast to +55% to +60% QoQ. It also noted that demand from North American cloud companies for enterprise SSDs (data-center-grade eSSDs) has surged, which is expected to push eSSD prices up 53% to 58% QoQ in the first calendar quarter.

As the AI inference wave sweeps the world, the golden age of AI ASICs has arrived

Matt Murphy, CEO of Marvell Technology, said in the earnings statement that the custom chip design giant won a record number of customer-specific chip design orders in fiscal 2026, and he expects this momentum to continue. Murphy said year-on-year revenue growth is expected to “further accelerate” this fiscal year, driven by “continued strong growth in the data center business.” He added that bookings for the data center business are growing at a “record rate.”

AI training, which is almost monopolized by Nvidia's AI GPUs, requires more powerful compute clusters and the ability to iterate the whole compute stack quickly, while AI inference places more emphasis on cost per token, latency, and energy efficiency once cutting-edge AI is deployed at scale. For example, Google explicitly positions Ironwood as a TPU generation “born for the AI inference era” and emphasizes its performance, energy efficiency, cost performance, and cluster scalability. Amazon's latest moves, however, show that AI ASICs may also have strong potential for training large models.

Over the medium to long term, AI ASIC systems will undoubtedly keep eroding Nvidia's monopoly premium and part of its market share, rather than replacing the GPU ecosystem outright. The underlying reason is that the core competition in the inference era is no longer just “peak compute,” but cost per token, power consumption, memory bandwidth utilization, interconnect efficiency, and total cost of ownership after hardware-software co-design. On such metrics, ASICs with dataflow, compilers, and interconnects tailored to specific workloads are naturally more cost-effective than general-purpose GPUs. The more likely future for AI data centers is that frontier training and general-purpose cloud compute remain dominated by GPUs, while large-scale internal inference, agent workflows, and fixed high-frequency workloads shift faster to ASICs, so that data centers enter an era of truly heterogeneous compute.

In the era of frontier training, what the AI field needs most is versatility, software maturity, and rapid adaptation to new model architectures, so GPUs naturally dominate. However, as the industry moves from “scarce training capacity” to “large-scale inference, agents, long context, and low latency,” the core KPI shifts from “maximum peak compute” to cost per token, throughput per watt, and system-level TCO. This is the root cause of the hyperscalers' (the cloud computing giants') collective acceleration of their ASIC efforts.
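The KPIs named above can be written down as simple formulas. The sketch below is a toy model only: the two devices and every input number are hypothetical placeholders chosen for illustration, not measurements of any real GPU or ASIC, and the cost model (hourly amortized cost plus electricity) is a deliberate simplification of system-level TCO.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """Hypothetical accelerator profile; all values are illustrative placeholders."""
    name: str
    hourly_cost_usd: float     # amortized hardware and hosting cost per hour
    power_kw: float            # average power draw in kilowatts
    tokens_per_second: float   # sustained inference throughput


def cost_per_million_tokens(acc: Accelerator, electricity_usd_per_kwh: float) -> float:
    """Cost per one million tokens: (hourly cost + hourly energy cost) / hourly tokens."""
    tokens_per_hour = acc.tokens_per_second * 3600.0
    energy_cost_per_hour = acc.power_kw * electricity_usd_per_kwh
    return (acc.hourly_cost_usd + energy_cost_per_hour) / tokens_per_hour * 1_000_000


def tokens_per_joule(acc: Accelerator) -> float:
    """Throughput per watt: tokens per second per watt, i.e. tokens per joule."""
    return acc.tokens_per_second / (acc.power_kw * 1000.0)


# Two made-up devices: a general-purpose part and a workload-specific part.
general = Accelerator("hypothetical general-purpose part", 4.0, 1.0, 10_000.0)
specific = Accelerator("hypothetical workload-specific part", 2.5, 0.6, 9_000.0)

for acc in (general, specific):
    cost = cost_per_million_tokens(acc, electricity_usd_per_kwh=0.10)
    print(f"{acc.name}: US${cost:.4f} per million tokens, "
          f"{tokens_per_joule(acc):.1f} tokens per joule")
```

The point of the toy example is that a device with lower peak throughput can still win on cost per token and throughput per watt if its hourly cost and power draw are lower, which is the shift in KPI the paragraph describes.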

For example, Google describes the Ironwood TPU as built for the “inference era” and scalable to 9,216 chips; Microsoft positions its newly launched AI ASIC, Maia 200, as an accelerator for cloud computing and claims 30% better performance per dollar than its latest-generation hardware; and AWS describes Trainium3 as a chip that pursues “optimal token economics,” highlighting a fourfold improvement in energy efficiency. This also shows that, as cloud giants launch an “AI compute cost revolution” to scale up AI ASIC adoption, the market has good reason to worry about Nvidia's growth prospects.
